How to Protect Your AI Development From Hackers

1 April, 2022

Artificial Intelligence (AI) has already transformed how many businesses operate and how their customers interact with the world around them. AI is so pervasive that a child can ask Alexa for cartoons that feature dinosaurs – and Alexa’s voice recognition software will provide age-appropriate fare. Other AI-enabled consumer interactions include chat, search engines, Netflix and Amazon recommendations, spam filters, automated language translations, and smart navigation and hazard alerts for cars and trucks, with more on the horizon.

The potential benefits of AI for business - faster processing, reduced manpower costs, optimized user experience - are generating a rush to develop applications in businesses of all sizes, in every industry.

Among the goals for a business AI app:

- Improve Efficiency: Developers use artificial intelligence to identify patterns, solve problems, and more efficiently perform tasks that are otherwise done manually

- Machine Learning: Computer algorithms find patterns in a stream of data input and use that data to continuously adjust and optimize desired outcomes

- Natural language processing: Optimized extraction of data from human written or spoken sources, including analytics that recognize and simulate human emotions and movements

- Perception: AI-enhanced software uses input from cameras and other visual or tactile inputs to optimize object and facial recognition

- Search: Uses means-ends analyses and heuristic reasoning to quickly recommend ‘best guess’ results

Despite the potential upsides of AI, management and the AI development team need to be hypersensitive to security concerns: the AI development process is a prime target for hackers. Even a small snippet of malware embedded in your shiny new AI program can lead to an inoperable app and a ransom demand.

AI is expensive – malware, even more so.

AI is a significant investment: the development arc may take a year or more, and a top data scientist can cost $300,000 per year.

However, if malware disrupts your already sizable investment in AI development, the cost is painfully high. Hackers may download sensitive documents, deny user access to your site, and cripple primary software applications. In these cases, the cost to the company soars, and many companies agree to pay the ransom.

While news reports focus on large ransomware attacks, reports from Coveware and other research firms indicate that malware is a growing problem for small- and medium-sized companies that are less likely to have robust cyber security systems.

It's no surprise that cybersecurity is the most worrisome risk for AI adopters. According to a Deloitte survey released in July 2020, 62% of adopters see cybersecurity risks as a major or extreme concern.

Top Security threats for AI/ML applications.

The Brookings Institution’s Artificial Intelligence and Emerging Technology Initiative (AIET) recently published a study listing the top five security threats for AI development:

1 System Manipulation: A frequent attack on ML systems is to embed false data inputs so that the algorithms produce false or inaccurate predictions. Since the impact of such an attack can be both lasting and far-reaching, System Manipulation is the greatest AI security threat.

2 Data Corruption: ML systems rely on large data sets – so it is critical that data is protected. Hackers can attack by corrupting or “poisoning” data during the ML process.

3 Transfer Learning Attacks: Most ML systems leverage a machine learning model which is pre-trained to fulfill designated purposes, so an attack on the training model can be fatal to an AI system. Security teams must be alert to unanticipated machine learning behaviors to identify this type of attack.

4 Online System Manipulation: Hackers can mislead ML machines with false inputs or gradually retrain them to provide faulty outputs. Robust security measures include system operations protections and maintaining records of data ownership.

5 Data Privacy: Safeguarding the confidentiality of large volumes of datasets is crucial, especially for data that is built into the machine learning model itself. Attackers may launch inconspicuous data extraction attacks which place the entire machine learning system at risk. Companies must safeguard ML systems against data and function extraction attacks.

The Brookings Report concluded that “Increasing dependence on AI for critical functions and services will not only create greater incentives for attackers to target those algorithms, but also the potential for each successful attack to have more severe consequences.”
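One common safeguard against the data corruption threat listed above is to record a checksum for every training file and re-check it before each training run. The sketch below is a minimal illustration using only Python's standard library; the manifest format and the function names `build_manifest` and `changed_files` are assumptions made for this example, not part of the Brookings study or any particular product.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Return the hex SHA-256 digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(data_dir: Path) -> dict[str, str]:
    """Map each file's relative path to its digest (hypothetical manifest format)."""
    return {
        str(p.relative_to(data_dir)): sha256_of(p)
        for p in sorted(data_dir.rglob("*"))
        if p.is_file()
    }


def changed_files(data_dir: Path, manifest: dict[str, str]) -> list[str]:
    """List files that were modified, added, or removed since the manifest was built."""
    current = build_manifest(data_dir)
    return sorted(
        name
        for name in manifest.keys() | current.keys()
        if manifest.get(name) != current.get(name)
    )
```

A check like this only detects tampering after the manifest was built, so the manifest itself should be stored somewhere the development team's working folders cannot overwrite.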

How does hacking work?

Hackers use AI, too. Hacker software bots search for a downed firewall or an unprotected network, gaining entry when the development team is accessing and archiving documents or working collaboratively on work-in-progress files.

Once malware enters your team’s collaborative environment, it seeks an unprotected file – a line of code, a data set, an analytics formula, a new jpeg – and implants a ‘ghost’ file. Since the malware looks and acts like a legitimate file, a cursory examination is unlikely to spot the intruder. More likely, the first time you realize that you have been hacked is when you are locked out of access to your AI development folders and files and receive a note demanding ransom.

AI development is a favorite target for hackers.

Machine learning (ML) systems, the core of AI development, are rife with vulnerabilities. Since some of these vulnerabilities require little or no access to the development team’s system or network, hackers have multiple opportunities to penetrate and install malware.

Machine learning vulnerabilities permit hackers to:

- Deny Access: Hackers infiltrate the development team’s operating system and deny access

- Create false algorithms: Hackers manipulate the machine learning systems’ integrity, causing them to make mistakes

- Expose confidential information: Hackers exfiltrate proprietary information and hold data and documents for ransom

AI’s use of Big Data creates hacking opportunities.

A critical aspect of AI is the use of Big Data. The development process uses vast reservoirs of data that are harnessed to create algorithm formulae that make predictions and provide insight, a process that is called “data modeling.” A company’s ability to harness Big Data is a determinant of how effective its AI software will work and whether the company gains a competitive edge.

AI and ML require more data, and more complex data, than other technologies. Data is often stored in the cloud, which itself can be secure, but as the development team accesses and shares files, the data is potentially vulnerable. For example, researchers in healthcare have found that small changes to digital images, even those undetectable to the human eye, can cause the AI algorithms to misclassify the images.
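To make the image example concrete, the following toy sketch shows how a tiny, uniform nudge to each input value can flip a classifier's decision. It uses a hand-written linear classifier in plain Python; the weights, the four-value "image", and the labels are all made up for illustration and do not come from the healthcare research mentioned above. Real attacks target far larger models, but the mechanism is similar in spirit.

```python
def score(weights, pixels, bias):
    """Linear score; a positive value means 'abnormal', otherwise 'normal'."""
    return sum(w * x for w, x in zip(weights, pixels)) + bias


def classify(weights, pixels, bias):
    return "abnormal" if score(weights, pixels, bias) > 0 else "normal"


def perturb(weights, pixels, epsilon):
    """Shift each pixel by at most epsilon in the direction that lowers the score."""
    def sign(w):
        return 1 if w > 0 else -1 if w < 0 else 0
    return [x - epsilon * sign(w) for w, x in zip(weights, pixels)]


# Toy 4-"pixel" image and invented weights (assumptions for illustration only).
weights = [0.9, -0.4, 0.7, -0.8]
bias = -0.5
image = [0.60, 0.20, 0.50, 0.30]

# No pixel moves by more than 0.03 on a 0-to-1 scale.
tampered = perturb(weights, image, epsilon=0.03)
print(classify(weights, image, bias), "->", classify(weights, tampered, bias))
```

The original input is labeled "abnormal" and the tampered copy "normal", even though each value changed by only 0.03.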

AI’s collaborative process creates vulnerabilities.

The AI development process is highly collaborative: various team members work on documents, update data sets, and generate analytics. Hacking malware monitors the development process as team members share files and access archived documents and data. Work-in-progress collaboration, as team members generate new data or documents in processing applications like Excel and Word, is especially vulnerable to hacking.

Since the development process likely includes third-party experts who are in remote locations, the overall development process becomes dependent on the quality of security measures by these off-site collaborators.

Work-in-progress documents are extra-vulnerable.

A favorite point of access for hackers is when team members collaborate on works-in-progress – data sets, formulae and analytics, written documents – or access and archive documents into folders or databases.

Hackers seek vulnerabilities in Word documents, Excel spreadsheets, data analytics reports, and videos, designs, and images that are undergoing review and alteration. Another point of attack is malicious attachments or links embedded in a collaboration email. And unless your third-party experts have first-rate security, you are providing an additional entry point for a hacker.

A common hacker approach is to re-encrypt work-in-progress files at their end points or extensions. Examples include:

- Database files ending with .sql, .accdb, .mdb, and .odb

- Microsoft files ending with .ppt (PowerPoint), .doc and .docx (Word), .xlsx, .xlsm, .xlsb, .xltx, .xls, .xlt, and .xml (Excel)

- Media and archive files ending with .mp4, .zip, .rar, and .tar

- Imaging files ending with .ssd, .raw, .svg, and .psd
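Because encrypted data looks close to random, one rough way to spot a file that has been re-encrypted is to measure the entropy of its bytes. The sketch below is a heuristic only, under stated assumptions: the `WATCHED` set, the 7.5 bits-per-byte threshold, and the function names are choices made for this example. It is limited to text-like formats because compressed formats such as .docx, .xlsx, .zip, and .mp4 already have high entropy and would trigger false alarms.

```python
import math
from collections import Counter
from pathlib import Path

# Text-like extensions only; compressed formats are naturally high-entropy.
WATCHED = {".sql", ".svg", ".xml"}


def shannon_entropy(data: bytes) -> float:
    """Bits of entropy per byte: 0.0 for constant data, up to 8.0 for uniform bytes."""
    if not data:
        return 0.0
    total = len(data)
    return -sum((n / total) * math.log2(n / total) for n in Counter(data).values())


def looks_encrypted(path: Path, threshold: float = 7.5, sample_size: int = 65536) -> bool:
    """Heuristic: a watched file whose leading bytes are near-random may be encrypted."""
    if path.suffix.lower() not in WATCHED:
        return False
    with path.open("rb") as handle:
        return shannon_entropy(handle.read(sample_size)) >= threshold
```

A flag from this check is a prompt for investigation, not proof of an attack; it complements rather than replaces access controls and backups.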

Other common hacker points-of-attack:

- Testing Tools: Gaining access to Active Directory and evading signature-based antivirus software via Cobalt Strike, Metasploit, Mimikatz, and other penetration-testing tools

Ensure AI development security with a VDR and DNFP.

To defend against hacking, a development team needs an ultra-secure environment both for archived documents and data files and for work-in-progress collaborative documents. Fortunately, ShareVault offers both.

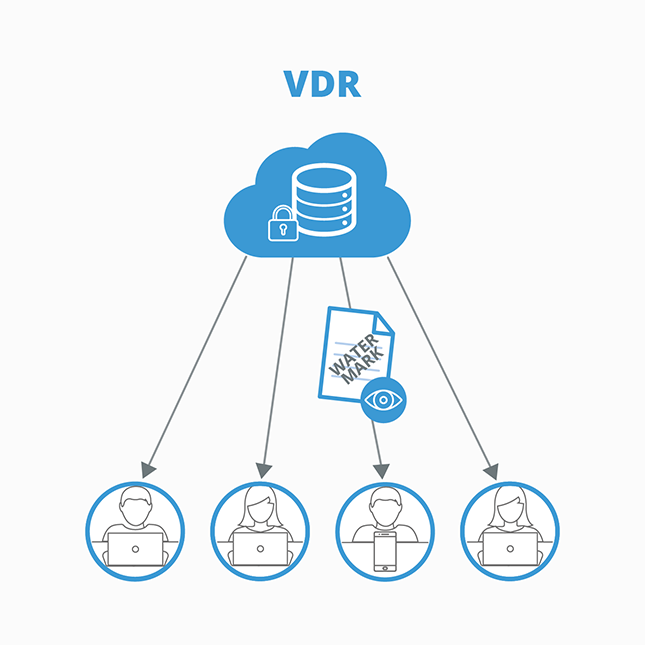

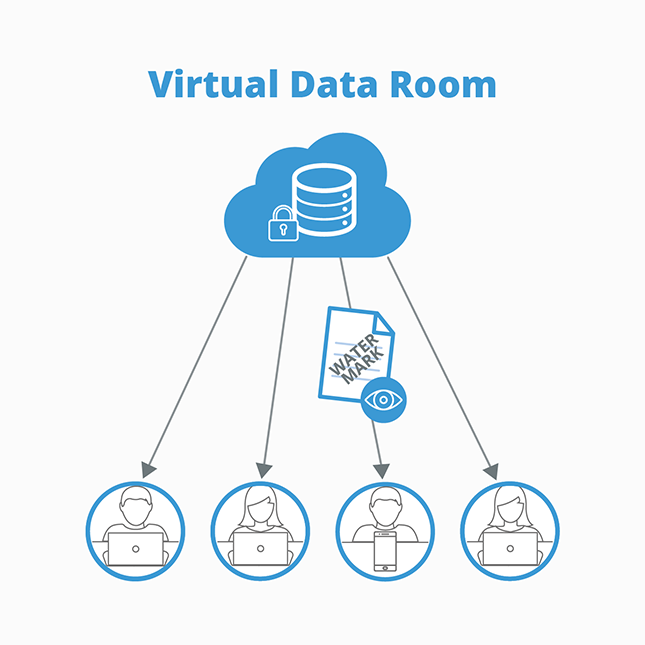

For archiving and sharing documents, use a Virtual Data Room

The best way to ensure the confidentiality of your AI development process is to use a virtual data room, or VDR. A VDR like the ShareVault platform is an ultra-secure repository that protects documents, data, and team member identities during development.

In the ShareVault VDR environment, the development team leader initiates the process by identifying team members and establishing permissions that provide access for your team and for third-party collaborators.

Once permissions are set:

- Authorized participants can access documents in the VDR from any browser or device, at any time, from anywhere

- Protected documents are encrypted with 256-bit AES and can only be opened with an active ShareVault connection

- The development team leader can specify each participant’s rights to view, print, save, or copy. Rights can be limited with an expiration date/time and documents can be remotely “shredded” even for files that have already been downloaded
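The idea of per-participant rights with an expiration can be pictured as a deny-by-default check. The sketch below is a generic illustration in Python and is not ShareVault's API or implementation; the `Grant` class, its fields, and `is_allowed` are hypothetical names invented for this example.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class Grant:
    """Hypothetical per-user grant: allowed actions plus an optional expiry."""
    user: str
    actions: frozenset
    expires_at: Optional[datetime] = None
    revoked: bool = False  # stands in for a remote "shred"


def is_allowed(grant: Grant, user: str, action: str, now: datetime) -> bool:
    """Deny by default; allow only an unrevoked, unexpired grant that lists the action."""
    if grant.revoked or grant.user != user:
        return False
    if grant.expires_at is not None and now >= grant.expires_at:
        return False
    return action in grant.actions


# Example: a reviewer may view and print until the end of June 2022 (UTC).
grant = Grant(
    user="reviewer@example.com",
    actions=frozenset({"view", "print"}),
    expires_at=datetime(2022, 7, 1, tzinfo=timezone.utc),
)
```

In this model, checking the grant at every open, rather than once at download, is what allows access to lapse or be withdrawn later.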

ShareVault’s ultra-secure VDR environment protects confidentiality even for participants in remote locations.

ShareVault provides fast access to archived documents.

Documents uploaded to a ShareVault virtual data room are secure and can be quickly located using ShareVault’s powerful search functions. Search results are sorted by relevance, with synopses showing how the searched keyword(s) are used in context.

As users search, they can view “zoomable” thumbnails that provide a quick peek of a document’s first page and use “infinite scrolling” to quickly scan a document to determine its relevance. Filters allow search by document name, file type, date and publishing source.

As an added help in organizing, a document can be “tagged” using ShareVault’s hierarchical tags feature. Tagged documents display a crosslink to where a document may reside in a separate folder. These tags can be revised and reordered at any time using a simple drag-and-drop process, without manually uploading the document to each folder/subfolder.

For work-in-progress documents, use Dynamic Native File Protection

A unique ShareVault capability is Dynamic Native File Protection (DNFP), which protects work-in-progress documents, like those created in Word, Excel, PowerPoint, Photoshop, Illustrator, AutoCAD, SolidWorks, Cadence, and other productivity and design applications, while they are in development and when they are shared – anywhere in the world. DNFP protects files regardless of how they are stored or shared, whether by email, Skype, Slack, Dropbox, Google Drive, Microsoft 365, Zoom, or other services.

Work-in-progress document revisions can be dated and stored to create a secure central repository, including an archival folder with documents ready for final inclusion in the AI app.

ShareVault’s DNFP includes auto-updates, to ensure users always have the latest iteration of protection.

Benefits of ShareVault Dynamic Native File Protection

- No learning curve: Team members don’t change their behavior to use DNFP - in fact, they won’t know the document is protected by ShareVault DNFP unless they try to open the file from an unauthorized device, or if an unauthorized person tries to log into the file

- Hacking and Ransomware protection: DNFP files require an authorized user or an authorized device, blocking any third-party attempts to hijack a file

- Works on any authorized device: DNFP works seamlessly on mobile devices as long as the user has been authorized for that device

- No file size limits: DNFP provides protection for any file, from an 87k Word doc to a large CAD file

- The only Dynamic Native File Protection available: ShareVault is the first software firm to offer Dynamic Native File Protection!

ShareVault is a smart AI Dev investment.

Given the considerable investment a company is making in developing an AI app, the cost of ensuring the security of the process is critical. Fortunately, that cost is not high: ShareVault’s VDR environment and Dynamic Native File Protection are both available with a ShareVault Enterprise subscription.

Focus on the AI development process, not your security!

ShareVault provides an AI development team with advanced security throughout the development process, including for work-in-progress documents. With ShareVault, developers can focus on creating groundbreaking new AI-enabled features and functions for their company and their customers. Visit ShareVault online or contact us to learn more!